108.413A: Computational Linguistics

Hyopil Shin (Graduate School of Data Science and Dept. of Linguistics, Seoul National University)

hpshin@snu.ac.kr, http://knlp.snu.ac.kr

Tue/Thu 11:00 to 12:15, Building 3, Room 102

TAs: 이상아 (visualjan@snu.ac.kr), 장한솔 (624jhs@snu.ac.kr)

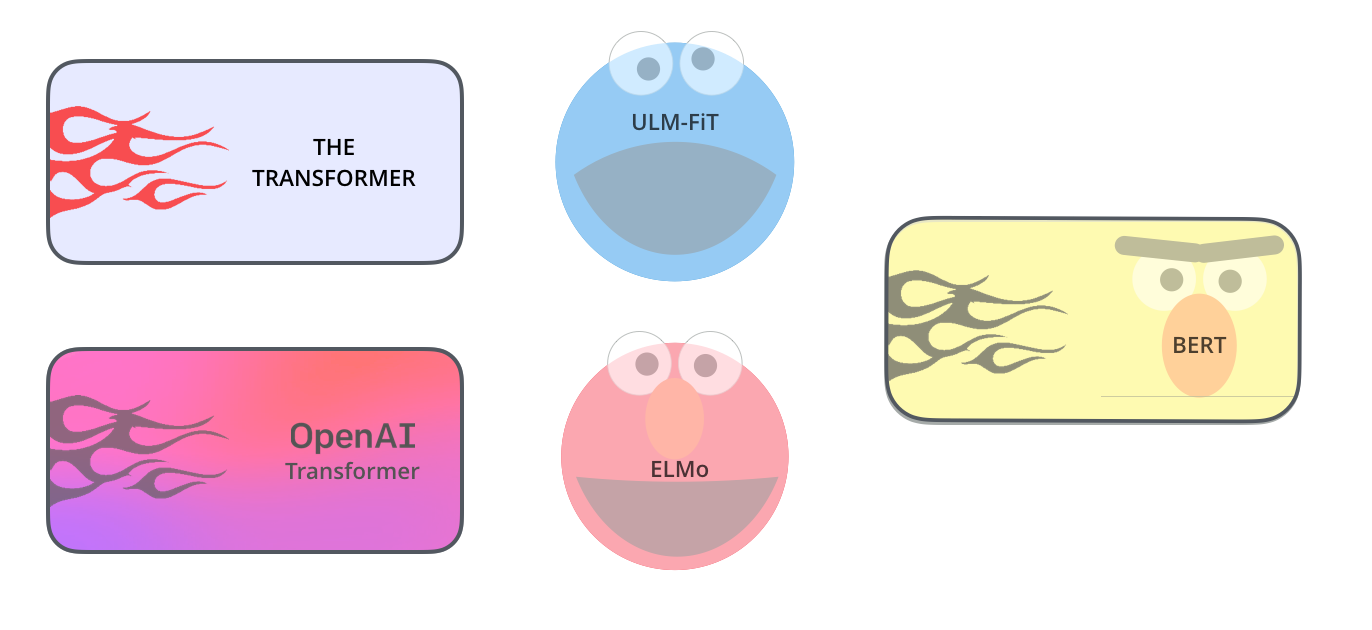

(Image sources: http://www.theverge.com/2016/3/11/11208078/lee-se-dol-go-google-kasparov-jennings-ai, http://jalammar.github.io/illustrated-bert/)

Course Description

Deep learning approaches have recently achieved very high performance across many computational linguistics and Natural Language Processing (NLP) tasks. This course introduces neural network models and deep learning methods for NLP from scratch.

On the model side, we start from the basic building blocks of neural networks: linear/logistic regression, perceptrons, backpropagation, and parameter optimization. We then cover concrete neural network architectures, including feedforward, convolutional, recurrent, and Long Short-Term Memory (LSTM) networks. Alongside these models, we treat word, sentence, and contextual embeddings and attention mechanisms in depth.

The first part of the course focuses on the basics of neural network models. The second part covers implementations of NLP tasks such as sentiment analysis (movie/text classification) and text generation. We will use Python 3.x and PyTorch. Through lectures and programming assignments, students will learn the implementation tricks needed to make neural networks work on practical problems.

Updates

- Due to COVID-19, classes will be held online via Zoom starting March 17. Please download the Zoom client at https://zoom.us/ before then.

- Before each class begins, the class invitation URL will be posted in the ETL announcements. Please join through that link.

- Please set up Python, PyTorch, and Colab for class!
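To confirm the setup, a short check like the one below can be run in a local terminal or in a Colab notebook cell. This is a minimal sketch and is not part of the official course materials. The function name `check_environment` is hypothetical. Detecting Colab by looking for `google.colab` in `sys.modules` is a heuristic assumption. The script only reports whether PyTorch can be imported and whether a CUDA GPU is visible; it does not install anything.

```python
import importlib.util
import sys


def check_environment():
    """Collect basic facts about the current Python setup (hypothetical helper)."""
    report = {
        "python": sys.version.split()[0],
        "python3": sys.version_info.major == 3,
        # Heuristic assumption: in a Colab runtime the google.colab module is normally already loaded.
        "colab": "google.colab" in sys.modules,
    }
    if importlib.util.find_spec("torch") is None:
        # PyTorch is not installed in this interpreter.
        report["torch"] = None
        report["cuda"] = False
    else:
        import torch

        report["torch"] = torch.__version__
        # True only if PyTorch can see a CUDA-capable GPU.
        report["cuda"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key}: {value}")
```

If `torch` prints `None`, PyTorch still needs to be installed in that environment. Colab runtimes typically come with PyTorch preinstalled. To get a GPU there, you usually have to change the runtime type first; otherwise `cuda` is reported as `False`.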

Useful Sites

- Lectures

- Other Resources

Textbook and Sites

- Deep Learning from Scratch (밑바닥부터 시작하는 딥러닝), by Koki Saitoh (사이토 고키), Hanbit (한빛출판사).

- Deep Learning from Scratch source code